Acquiring an exact-match domain (EMD) for a high-traffic keyword used to be a very lucrative business, since it granted a somewhat unfair advantage in Google search results. So Google’s Matt Cutts & friends decided to go on a witch-hunt against this shady SEO practice and target trashy EMD sites.

But it isn’t as simple a task as one might think… the big challenge is to effectively single out the really crappy EMD sites and demote only those, while high-quality EMD sites keep their well-deserved rankings without falling into the anti-spam crossfire.

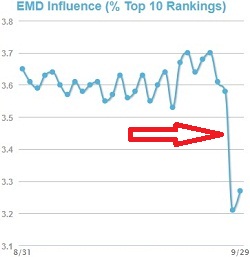

First, let’s try to grasp the overall effect of this new algorithm. Matt Cutts ambiguously stated it will affect about 0.6% of queries in English “to a noticeable degree.” Here’s how the new EMD algorithm is reflected in MozCast’s EMD Influence metric:

See how the EMD influence chart dropped sharply on September 28th? In some niches that is quite a sudden, hefty shake-up… but has the new algorithm managed to filter out only the low-quality sites? Let’s dive deeper.

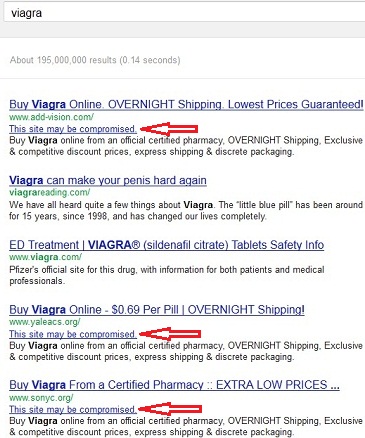

Historically, [viagra] is a query Google has always struggled with. A humongous number of sites use spam, blackhat SEO tactics and, of course, exact-match domains to rank high for this money keyword and its derivatives. Here’s what comes up today (October 1st) when searching for viagra:

I’m so happy I didn’t really try to buy Viagra online, because Google’s search results are incredibly poor for this query: Viagra’s official website is only third, the top two results are utterly miserable sites and Google itself warns that 3 out of the top 5 sites may be compromised (marked with red arrows)! Lame, Google… really really lame…

However, one lousy query’s search results are not enough to deem this update a failure. To gather more information I combed Google’s Webmaster Central forum, which is currently exploding with related threads. My overall impression is quite disturbing…

While I did find many spammy and poor sites which apparently ranked high before the EMD update and indeed deserved to be demoted, I also found that a significant portion of the victims were high-quality sites. One prominent complaint comes from the webmaster of Pregnancy.co.uk:

> As of yesterday it has been completely de-listed for 100% terms – the site still shows up if you type in the web address – the site had very good user metrics and we have spent a lot of time acquiring links from various government bodies in the UK.

Pregnancy.co.uk is a pretty awesome resource for pregnancy-related information and I certainly wouldn’t consider it a low-quality website… but it still got hit hard by the EMD update. After discovering a few more similarly penalized websites, I’m led to the obvious question: how does Google’s EMD update decide what counts as a low- or high-quality website?

Matt Cutts says the update is unrelated to the Panda or Penguin updates, but isn’t it THEIR JOB to filter low-quality websites? It just doesn’t sound reasonable that the EMD update operates alone… I’m assuming Cutts simply meant that no Panda/Penguin updates or refreshes were rolled out alongside the EMD update.

In fact, I think that the EMD update relies heavily on data from the Panda/Penguin updates: if an exact-match domain site has been flagged by Panda or Penguin, there’s a big chance it’ll be demoted by the EMD update as well. That also correlates with some of the complaints on the forum, which came from webmasters whose sites were previously hit by Panda or Penguin.
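
To make that guess concrete, here’s a tiny Python sketch of the rule I’m speculating about. To be clear, this is only an illustration of my hypothesis and not Google’s actual algorithm: the function names, the way a domain is compared to a query, and the `flagged_by_panda_or_penguin` input are all assumptions made up for this example.

```python
import re


def _normalize(text: str) -> str:
    """Lowercase and drop everything except letters and digits."""
    return re.sub(r"[^a-z0-9]", "", text.lower())


def is_exact_match_domain(domain: str, query: str) -> bool:
    """Hypothetical EMD check: the first label of the domain (ignoring a
    leading 'www.') equals the query once spaces and hyphens are removed."""
    host = domain.lower()
    if host.startswith("www."):
        host = host[4:]
    label = host.split(".")[0]
    return _normalize(label) == _normalize(query)


def likely_demoted(domain: str, query: str, flagged_by_panda_or_penguin: bool) -> bool:
    """Speculated rule: an EMD is demoted only if a quality filter
    (Panda or Penguin) has already flagged the site."""
    return is_exact_match_domain(domain, query) and flagged_by_panda_or_penguin


# A clean EMD would be left alone under this hypothesis...
print(likely_demoted("example-keyword.com", "example keyword", False))
# ...while an EMD that was already flagged would be demoted.
print(likely_demoted("example-keyword.com", "example keyword", True))
```

Under this reading, the exact-match domain alone never triggers a demotion; it only matters in combination with an existing quality flag.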

If my speculations are indeed correct, then the only way to escape the EMD update’s claws is to recover from Panda/Penguin first. That sounds much more reasonable to me. I would even dare to say that rolling out another quality filter unconnected to the two most influential quality algorithms of recent years would be just plain stupid…

And that can’t be… right…?