Yesterday Google announced on its Webmaster Central Blog that we can now use the Fetch as Googlebot feature in Webmaster Tools to get Google to find and crawl new pages, and even entire sections, faster than before: within a day! Note that this doesn’t mean the page will get indexed, only that it will be crawled.

How Does It Work?

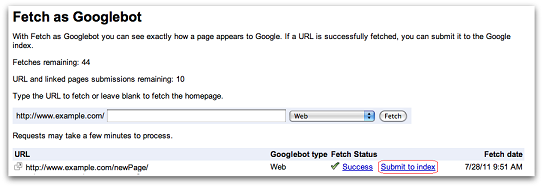

First, you need to have a valid Webmaster Tools account. Open the Fetch as Googlebot feature from the Diagnostics section. Here you can type the URL from your website that you want to fetch as Googlebot. After it has been fetched successfully, you will see the following screen:

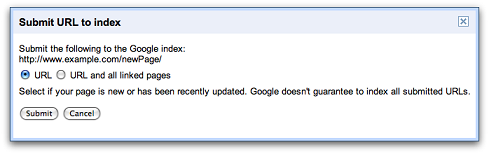

If you click on the “Submit to index” link, you will see a box with the following options:

URL: Submit only the individual URL you have just fetched. Limited to 50 submissions per week.

URL and all linked pages: Submit the individual page and all the pages it links to (a more thorough crawl of your site). Limited to 10 submissions per month.

Why Doesn’t Crawling Guarantee Indexing?

Crawling and indexing are different processes. Crawling means reading the content of the page; the page is then “evaluated”, and only after that is it indexed. Examples of pages that won’t be indexed: pages with very thin content, or pages marked as noindex in the robots meta tag.
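If you want to check whether one of your own pages carries a noindex directive before submitting it, here is a minimal sketch in Python. It only looks at the robots meta tag in the HTML you pass in; the names RobotsMetaParser and has_noindex are made up for this example, and it says nothing about how Google itself evaluates a page.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the directives of every <meta name="robots"> tag."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        # HTMLParser already lowercases tag and attribute names.
        if tag != "meta":
            return
        attr_map = {name: (value or "") for name, value in attrs}
        if attr_map.get("name", "").strip().lower() != "robots":
            return
        # The content attribute is a comma-separated list, e.g. "noindex, follow".
        for token in attr_map.get("content", "").split(","):
            token = token.strip().lower()
            if token:
                self.directives.add(token)


def has_noindex(html_text):
    """Return True if the HTML contains a robots meta tag with noindex."""
    parser = RobotsMetaParser()
    parser.feed(html_text)
    return "noindex" in parser.directives


# Hypothetical page source, for illustration only.
sample = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(has_noindex(sample))
```

A page that passes this check can still be left out of the index for other reasons, such as thin content.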

When To Use It When Working From Home?

Using this feature is basically saying to Google “Hi, I have added new pages or made some changes to existing ones and you haven’t noticed yet!” so that your additions and updates get crawled faster. It is therefore ideal when you have made small changes, or even added a whole new section, to your online business site.

Note that it doesn’t replace other methods such as a sitemap or an RSS feed, and certainly not the natural crawling process! It is simply a supporting tool for working with Google better and faster.
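Since a sitemap remains one of the main ways to tell Google about your pages, here is a rough sketch of how a very basic one could be generated in Python. The function name build_sitemap and the example.com URLs are placeholders for this example; the namespace is the one defined by the sitemaps.org protocol, and optional fields such as lastmod are left out.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal sitemap XML string with one <url><loc> entry per URL."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        # ElementTree escapes characters such as & when serializing.
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


# Placeholder URLs, for illustration only.
pages = [
    "https://www.example.com/",
    "https://www.example.com/new-section/",
]
print(build_sitemap(pages))
```

You would then save the output as a file on your site and submit it through Webmaster Tools as usual.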

How Is It Different From The Add your URL Feature?

Google also has a public Add your URL to Google option, but it isn’t as effective as the Fetch as Googlebot feature, which carries more weight and gets higher priority.