Besides the basic analytics tool (hopefully) installed on every website, one of the most important site analysis tools is Google Webmaster Tools. It essentially serves as the site's ambassador at Google and offers a direct connection to the search engine's data about the site.

Recently, Google made some important changes and updates that affect this connection, specifically the "Crawl errors" section, which received a major overhaul. The whole section has been completely remodeled to "make it even more useful", as Google put it.

The changes are supposed to give less savvy webmasters a simpler interface that helps them understand their crawl errors better. The section is now divided into two segments: "Site Errors" and "URL Errors". Let's go deeper:

Site Errors

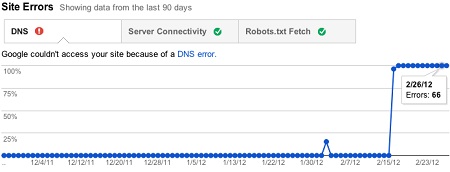

These errors affect the whole site rather than specific URLs. The following site error categories appear at the top of the Crawl errors page:

- DNS- All errors related to the Domain Name System. Unlike the previous version, the webmaster is only notified about site-wide DNS errors and NOT about problems with specific URLs (which is somewhat disappointing).

- Server Connectivity- All errors related to the server. Again, the webmaster is only notified about site-wide connectivity errors and NOT about connectivity issues with specific URLs (once more, disappointing).

- Robots.txt Fetch- Crawl errors related to the robots.txt file. The webmaster is now notified only when there is an error accessing the file ITSELF, and NOT about which pages the robots.txt file blocks. Google says it will soon show pages blocked by robots.txt in the "Crawler access" section.
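Since this category no longer lists blocked pages, you can check them yourself in the meantime. The sketch below uses Python's standard `urllib.robotparser` and parses the file from a string, so no network access is needed. The robots.txt contents and the example.com URLs are hypothetical, and Python's parser follows the original robots.txt conventions, so it may not interpret every rule (for example, path wildcards) exactly as Googlebot does.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents for an example site.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /private/

User-agent: *
Disallow: /tmp/
"""

def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that robots_txt disallows for the given user agent."""
    parser = RobotFileParser()
    # parse() accepts an iterable of lines instead of fetching the file over HTTP.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]

urls = [
    "https://www.example.com/",
    "https://www.example.com/private/report.html",
    "https://www.example.com/tmp/cache.html",
]
print(blocked_urls(ROBOTS_TXT, urls))
# ['https://www.example.com/private/report.html']
```

Note that `/tmp/` is not reported for Googlebot: once a group names a crawler explicitly, that crawler ignores the generic `*` group.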

Here's an example of how this segment looks when there are DNS-related errors:

URL Errors

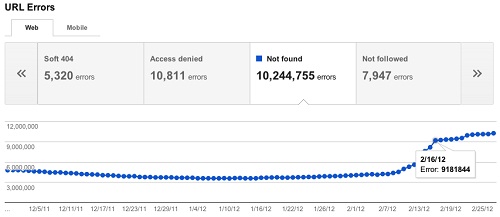

These errors affect specific URLs only and don't necessarily relate to a site-wide issue. The following URL error categories appear below the site errors segment:

- Server Error- Specific URLs that Googlebot had trouble accessing (essentially the 5xx errors).

- Soft 404- Specific URLs that Google detects as errors but that don't return a proper 404 response code (so Google may index them by mistake).

- Access Denied- URLs that return a 401 response code, which basically means that a login is required to see these pages.

- Not Found- URLs that Google can't find (mostly those returning a 404 response code).

- Not Followed- URLs that Google has trouble following, such as redirect errors.

- Other- All other specific URL-related crawling errors.
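To make these categories more concrete, here is a rough sketch of how a single crawl result might be sorted into them. This is not Google's actual logic: the function name, the soft-404 phrase list and the `redirect_followed` flag are hypothetical, and real soft-404 detection is far more sophisticated than a keyword match.

```python
from typing import Optional

# Hypothetical phrases suggesting an error page that was served with a 200 status.
SOFT_404_HINTS = ("page not found", "no longer available", "does not exist")

def classify_url_error(status: int, body: str = "",
                       redirect_followed: bool = True) -> Optional[str]:
    """Map an HTTP status (plus page body) to a URL error category, or None if healthy."""
    if 500 <= status <= 599:
        return "Server Error"      # the 5xx family
    if status == 401:
        return "Access Denied"     # login required
    if status == 404:
        return "Not Found"
    if 300 <= status <= 399:
        # A redirect is only an error if it could not be followed (loop, bad target...).
        return None if redirect_followed else "Not Followed"
    if status == 200:
        text = body.lower()
        if any(hint in text for hint in SOFT_404_HINTS):
            return "Soft 404"      # looks like an error page but returns 200
        return None
    return "Other"                 # anything else, e.g. 403 or 418

print(classify_url_error(503))                           # Server Error
print(classify_url_error(200, "<h1>Page not found</h1>"))  # Soft 404
```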

Here's an example of how this segment looks when there are multiple URL errors:

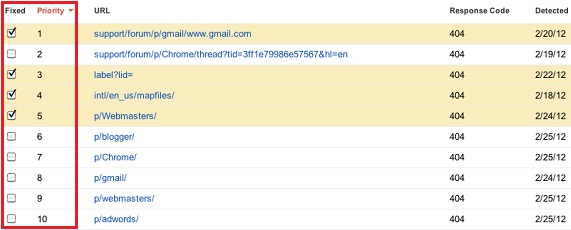

“Fixed” and “Priority”

Two new columns have been added to the list of errors (in the URL Errors segment):

Fixed- The webmaster can now mark a given error as "Fixed" once the problem has been, well, fixed. Even though Google says this is a way to "let them know" the issue has been fixed, I think it is JUST a way to remove that error from the list; it doesn't prompt Google to recrawl the URL.

Priority- This is a very interesting new column. It ranks all the errors based on whether the user can actually do something to solve them (inclusion in the Sitemap, broken link, server software, 301 redirect) and on their overall impact (how many pages link to the URL, how much search traffic it received).
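Google doesn't publish how the Priority column is computed. As a purely illustrative sketch, assuming each error carries a hypothetical `fixable` flag plus its linking-page and search-traffic counts, a similar ordering could be produced like this:

```python
from dataclasses import dataclass

@dataclass
class UrlError:
    url: str
    fixable: bool        # hypothetical: can the webmaster act on it (e.g. fix a broken link)?
    linking_pages: int   # how many pages link to the URL
    search_traffic: int  # how much search traffic the URL received

def prioritize(errors):
    """Actionable errors first, then by linking pages, then by search traffic (both descending)."""
    return sorted(errors, key=lambda e: (not e.fixable, -e.linking_pages, -e.search_traffic))

errors = [
    UrlError("/old-page", fixable=True, linking_pages=3, search_traffic=40),
    UrlError("/typo-link", fixable=True, linking_pages=12, search_traffic=5),
    UrlError("/spam-target", fixable=False, linking_pages=50, search_traffic=0),
]
for rank, err in enumerate(prioritize(errors), start=1):
    print(rank, err.url)
```

Sorting on a tuple strictly puts actionable errors ahead of everything else and only then compares impact; a real system would more likely blend these signals into a single weighted score.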

Here are the two new columns in action:

Overall, the remodeled "Crawl errors" section definitely makes things a bit simpler and friendlier. However, it may now be missing some key information that was available in the previous version, such as DNS and server connectivity errors for specific URLs. A list of pages blocked by robots.txt is also still missing and has yet to appear elsewhere in Webmaster Tools.